My attention was drawn by Anil Dash on Twitter to two posts discussing the purported and actual capacities of devices that, for example, advertise “64GB of storage.”

Marco Arment linked to an article on The Verge, asserting Microsoft’s 64GB Surface Pro will have only 23GB of usable space. Arment went on to suggest that device manufacturers should be required to market devices based on the “amount of space available for end-user” data.

Ed Bott’s take, on the other hand, was that Microsoft’s Surface Pro is more comparable to a MacBook Air than other tablets, and its baseline disk usage should be considered in that context.

I think Arment’s and Bott’s analyses are both useful. It would be nice, as Arment suggests, if users were presented with a realistic expectation of how much capacity a device will have after they actually start to use it. And there is merit in Bott’s defense that a powerful tablet, using a more computer-scale percentage of a built-in disk’s storage, should be compared with other full-fledged computers.

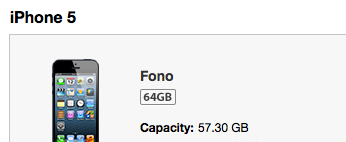

Let’s just say if fudging capacity numbers were patented, every tech company would be in hot water with the patent trolls. A quick glance at iTunes reveals that my allegedly 64GB iPhone actually has a capacity of 57.3GB.

I don’t know precisely what accounts for this discrepancy, but I can guess that technological detritus, such as the metadata the filesystem uses merely to manage the content on the disk, takes up a significant amount of space. On top of that, the discrepancy may include space allotted for Apple’s operating system, bundled frameworks, and apps. Additional features such as recovery partitions always come at the cost of that precious disk space. Nonetheless, Apple doesn’t sell this 64GB iPhone as the 57.3GB iPhone. No other company would, either.
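
To put the two devices side by side, here is a quick back-of-the-envelope sketch in Python. It uses only the figures quoted above (23GB usable on the Surface Pro per The Verge, 57.3GB reported by iTunes for my iPhone). The function name `usable_share` and the device labels are made up for illustration, and I haven't verified either figure independently.

```python
# Advertised vs. reported capacity, using only the figures quoted in this post.
ADVERTISED_GB = 64

# Reported usable capacity in GB (as quoted above; not independently verified).
reported_gb = {
    "Surface Pro (per The Verge)": 23.0,
    "iPhone (per iTunes)": 57.3,
}


def usable_share(advertised_gb, usable_gb):
    """Return (overhead in GB, usable fraction of the advertised capacity)."""
    overhead = advertised_gb - usable_gb
    return overhead, usable_gb / advertised_gb


for device, usable in reported_gb.items():
    overhead, share = usable_share(ADVERTISED_GB, usable)
    print(f"{device}: {usable:.1f} of {ADVERTISED_GB} GB usable "
          f"({share:.1%}), {overhead:.1f} GB spoken for")
```

By this rough math the Surface Pro leaves a bit over a third of its advertised capacity to the user, while the iPhone leaves roughly nine tenths. Neither one is a “64GB” device in the sense a customer might assume.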

It seems that in the marketing of computers, capacity has always been cited in the absence of any clarification about actual utility. One of my first computers (after my Timex Sinclair 1000) was the Commodore 64, a computer whose RAM capacity was built into the very marketing name of the product. Later, Apple followed this scheme with computers that would be known as the Mac 128K and Mac 512K, each name alluding to the machine’s ever-increasing RAM capacity.

The purported RAM capacity was somewhat misleading. Sure, the Commodore 64 had 64K of RAM, but some of that had to be used up by the operating system. Surely it would not be possible to run a program that expects 64K of RAM, and have it work. So was it misleading? Yes, all marketing is misleading, and just as it’s easier to describe an iPhone 5 as having 64GB capacity, it was easier to describe a Commodore as having 64K, or a Mac as having 128K of RAM.

But the capacity claims were more honest than they might have been, thanks to the pragmatic allure of storing much of a computer’s own private data in ROM instead of RAM. Because in those days it was much faster to read data from ROM than from RAM, there was a practical, competitive reason for a company to store as much of its “nuts & bolts” code in ROM as possible. The Mac 128K shipped with 128K of RAM, but also shipped with 64K of ROM, on which much of the operating system’s own code could be stored and accessed.

Thanks to the ROM storage that came bundled with computers, more of the installed RAM was available to end users. And thanks to the slowness of floppy and hard disks, not to mention the question of whether a particular user would even have one, disk storage was also primarily at the user’s discretion. It was only after the performance of hard drives and RAM increased that the allure of ROM diminished, and computer makers turned gradually away from storing their own data separately from the space previously reserved for users. With the increasing speed and size of RAM, and then with the advent of virtual memory on consumer computers, disk and RAM storage graduated into a sort of virtual ROM.

The transition took some time. Over the years from that Mac 128K, for example, Apple did continue to increase the amount of ROM that it included in its computers. I started working at Apple in an era when a good portion of an operating system would be “burned in ROM”, with only the requisite bug fixes and updates patched in from disk. I haven’t kept up with the latest developments, but I wouldn’t be surprised if the ROM on modern Macs is only sufficient to boot the computer and bootstrap the operating system from disk. For example, the technical specifications of the Mac mini don’t even list ROM among its attributes. The vast majority of capacity for running code on modern computers is derived from leveraging in tandem the RAM and hard disk capacities of the device.

So we have transitioned from a time when the advertised capacity of a device was largely available to a user, to an era in which the technical capacity may deviate greatly from what a user can realistically work with. The speed and affordability of RAM, magnetic disk, and most recently SSD storage have created a situation where the parts of a computer that the vendor most wants to exploit are the same ones that the customer covets. Two parties laying claim to a single precious resource can be a recipe for disaster. Fortunately for us customers, RAM, SSD, and hard disks are cheaper than they have ever been. Whether we opt for 64GB, 128GB, or 1TB, we can probably afford to lend the device makers a little space for their virtual ROM.